Choosing, Managing, And Evaluating A Penetration Testing Service

[The following is excerpted from “Choosing, Managing and Evaluating a Penetration Testing Service,” a new report posted this week on Dark Reading’s Vulnerability Management Tech Center.]

Hiring a security consulting company to perform penetration testing can make a company more secure by uncovering vulnerabilities in security products and practices — before the bad guys do. It can also be an extremely confusing and frustrating experience if deliverables don’t meet the needs or requirements of the business units. Understanding and properly managing relationships with outsourced security providers can be the difference between an expensive mistake and a well-executed exercise in security risk management.

To establish and maintain an effective relationship with a security consulting firm, one thing is needed above all: communication. Clear, concise and meaningful communication between your organization and your chosen vendor will absolutely affect the level of service and value on the deliverable side of the engagement.

And clear communication requires a firm understanding of the entire process of working with a consulting firm — from contract to payment and everything in between. Knowing what goes into and influences each of these steps, and having a firm set of reasonable expectations, will go a long way toward ensuring that there are no surprises along the way.

Before any discussion about developing effective relationships with security consultants can take place, it’s important to define what “penetration test” really means. You may think you know what a penetration test is, but the definition of the practice and its variables has changed in recent years.

A decade ago, a penetration test was generally a “black box” test that took place at the network level. Security researchers were given no details about the network they had been hired to attack, and, because the attack targeted the network level, the researchers usually attacked ports, services, operating systems and other components that make up the lower layers of the OSI model. Indeed, the OSI model, antiquated as it may seem, offers a good way to define the scope of a penetration test.

There are several categories of penetration test, and each requires different levels of management and coordination.

1. Black-box testing

Black-box testing is performed by an attacker who has no knowledge of the victim’s network technology. While pen testers can certainly still provide this type of testing, the model isn’t used as often as it once was because attackers are now sophisticated enough that they will probably know a vast amount about your technology in advance of an attack.

2. White-box testing

White-box testing usually involves close communication and information sharing between your technology group and pen testers. Pen testers are typically supplied with legitimate user accounts, URLs, and even user guides and documentation. This type of penetration test will usually provide the most comprehensive results and is currently the most commonly requested.

3. Gray-box testing

As you might imagine from the name, gray-box testing is a mix of black-box and white-box testing. With gray-box testing, pen test customers don’t hand over the company jewels but do provide testers with some information. This might include credentials or access to a corporate intranet site.
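One way to keep the three categories straight when scoping an engagement is to write down what each one hands to the testers. The following Python sketch is illustrative only: the names `PenTestType`, `SHARED_WITH_TESTERS` and `shared_items` are hypothetical, and the item lists simply restate the examples given above rather than any standard checklist.

```python
from enum import Enum


class PenTestType(Enum):
    """The three test categories described in the article."""
    BLACK_BOX = "black-box"
    WHITE_BOX = "white-box"
    GRAY_BOX = "gray-box"


# Illustrative mapping (an assumption, not a standard): the information a
# customer typically shares with testers under each category, taken from
# the examples in the text above.
SHARED_WITH_TESTERS = {
    PenTestType.BLACK_BOX: (),
    PenTestType.WHITE_BOX: (
        "legitimate user accounts",
        "URLs",
        "user guides and documentation",
    ),
    # Gray-box sharing varies by engagement; these are examples only.
    PenTestType.GRAY_BOX: (
        "credentials",
        "access to a corporate intranet site",
    ),
}


def shared_items(test_type: PenTestType) -> tuple:
    """Return the items shared with testers for the given category."""
    return SHARED_WITH_TESTERS[test_type]


if __name__ == "__main__":
    for test_type in PenTestType:
        items = shared_items(test_type)
        summary = ", ".join(items) if items else "nothing"
        print(f"{test_type.value}: testers receive {summary}")
```

Writing the list down this way, even informally, gives both sides something concrete to confirm before the contract is signed.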

Companies will have other things to consider when determining the scope of the pen test they will undergo. The first is whether to include a social engineering assessment in the engagement.

When you look at security, one of the biggest risks is people. Indeed, the end user is commonly considered the weakest link in computer security. Most security consultancies will be able to assess the ability of your users to, say, detect phishing schemes, but there are some legal and human resources issues that must be considered before including social engineering as part of your pen testing suite of services.

For detailed recommendations on how to select a pen testing service provider — and for some advice on ways to evaluate the service you receive — download the free report.


Article source: http://www.darkreading.com/choosing-managing-and-evaluating-a-penet/240161596

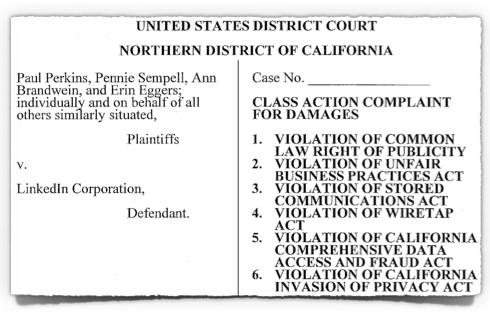
