Serial iOS bug finder videosdebarraquito has struck again.


He found a bug in the iOS 6.1.3 lockscreen, almost as soon as that update was published (an irony, given that the main purpose of 6.1.3 was to fix various lockscreen flaws).

Now he’s made a video of himself bypassing the lock on the just-released iOS 7.

(I’ve given you more than enough to find the video if you want. But I haven’t provided a direct link here. Call me an old-school wowser. I can take it.)

Lock screens have a chequered security history, with Android having its recent share of problems, too.

The main reason is complexity, one of security’s mortal enemies.

You can understand why some exceptions to a phone lock might be desirable, or even required by the regulators: the ability to call the emergency number, no matter what, for example.

Similarly, a clock is handy when the phone is locked, as is an indication of whether there’s network service available should you want to make a call.

So some “special case” programming is needed in phone lock software, which inevitably means more to go wrong with the part that implements the actual lock.

But functionality to check whether you’ve just dialled the three digits 112, 999, 000, 911, or some other well-known emergency number, and to update a digital clock once a minute, is a far cry from the feature set implemented by the average lockscreen app on a modern smartphone.
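To give a feel for how little that minimal feature set needs, here is a rough sketch in Python. It is purely illustrative and is not how iOS, Android or any real phone implements its lock; the names `EMERGENCY_NUMBERS`, `may_dial_while_locked` and `lockscreen_clock` are made up for this example, and the number list contains only the four examples mentioned above.

```python
# Hypothetical illustration only: not taken from any real phone's lock code.
# The set holds just the example emergency numbers named in the article.
EMERGENCY_NUMBERS = {"112", "999", "000", "911"}


def may_dial_while_locked(dialled: str) -> bool:
    """Allow a call from a locked phone only on an exact emergency-number match."""
    return dialled in EMERGENCY_NUMBERS


def lockscreen_clock(hour: int, minute: int) -> str:
    """Format the time for a clock that is redrawn once a minute."""
    return f"{hour:02d}:{minute:02d}"
```

The exact-match test is deliberate: a number that merely starts with `911` is refused, so the only code path out of the lock is the one for the listed numbers. Every extra lockscreen feature adds another such path to get right.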

We’re no longer content to have our phones locked: we want them locked, except for a huge raft of features.

Indeed, our terminology even reflects that: we tend to say, “My phone’s at the lock screen,” not, “My phone’s locked.”

In truth, the phone isn’t locked at all – the lockscreen app typically requires and makes extensive use of access to the network and the filing system, plus the ability to interact fully with the user.

Worse, we’re not content with just seeing general information on our lockscreens, like the latest weather and news headlines, but are happy for our “locked” phones to continue to disgorge information of a more personal nature, such as posts to our Facebook wall, Tweets we’re mentioned in, and more.

And heaven forfend that we ever have to fumble with the phone lock before we are able to snap a photo!

Apple addressed these issues in iOS 7 with what it describes as a feature, but which I consider a bad idea from the start. (Call me an old-school wowser. I can take it.)

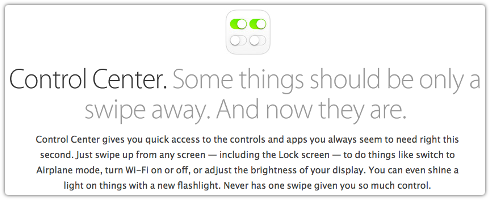

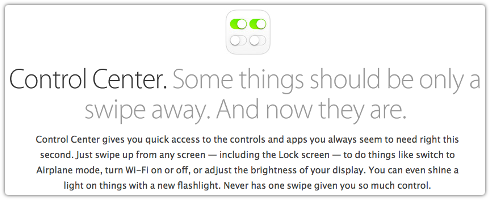

It’s called Control Center, and it flies under the banner that “some things should only be a swipe away. And now they are.”

Control Center gives you quick access to the controls and apps you always seem to need right this second. Just swipe up from any screen — including the Lock screen — to do things like switch to Airplane mode, turn Wi-Fi on or off, or adjust the brightness of your display. You can even shine a light on things with a new flashlight. Never has one swipe given you so much control.

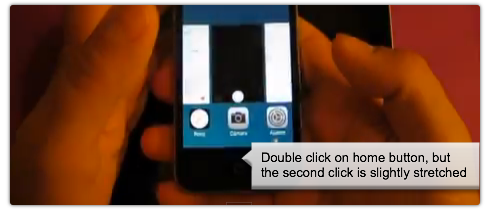

Sadly, that one swipe, combined with some dextrous fingerwork, gives videosdebarraquito so much control that he can access your photos via a backdoor entrance.

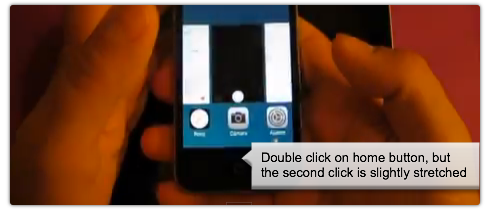

It seems he gets from the lock screen to the control center, from there to the alarm clock, and from there, by means of some deft fingerwork – described in his video as “double click on the home button, but the second click is slightly stretched” – into your photo gallery.

Now he can do whatever you could do with your photos if the phone were unlocked: look at them, delete them, upload them and post them on social networking sites.

Let’s hope that Apple fixes this bug quickly.

In the meantime:

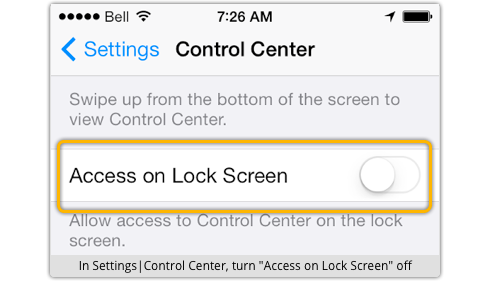

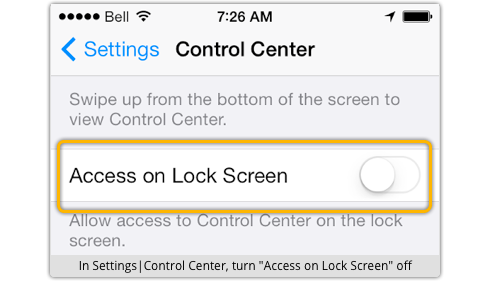

- Reduce the functionality available from the iOS 7 lockscreen, notably turning off access to the control center.

- Don’t take photos of a genuinely personal or private nature on your phone. (Call me an old-school wowser. I can take it.)

Article source: http://feedproxy.google.com/~r/nakedsecurity/~3/thnm89-XIrc/

London’s Metropolitan Police have arrested eight men in connection with a £1.3 million ($2.08 million) bank heist carried out with a remote-control device they had the brass to plug into a Barclays branch computer.


Happy day, USA: When we click “Like” on Facebook, we are now constitutionally protected from getting fired!


The fingerprint sensor on Apple’s new iPhone 5s could well be the device-within-a-device that brings biometrics into the everyday mainstream.


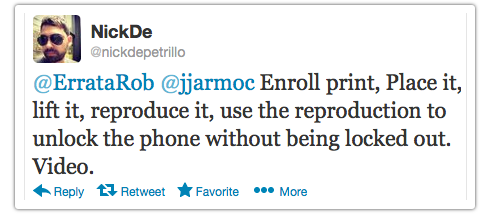

Brian Guan, a Principal Software Engineer at LinkedIn (currently on sabbatical), said it all when he
